This is a guest post by Alex J. Coyne.

The thought of identity theft alone is scary enough to most, but a phenomenon called deepfakes adds an entirely new element to a crime that’s been around for centuries.

Deepfakes present a terrifying reality where scammers can steal more than just your identity: Technology could allow them to steal your likeness and make it do almost anything they want.

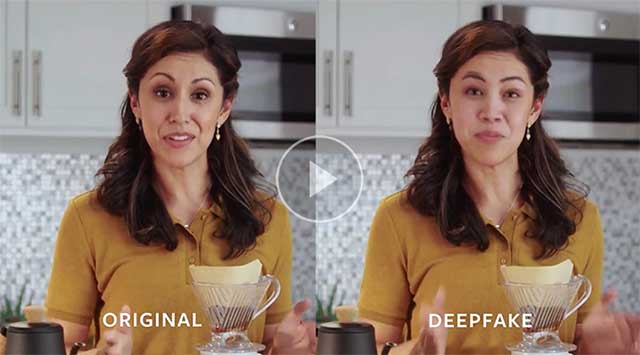

So-called “deepfakes” are the new face-swap: special software and apps use algorithms to merge images of someone’s face with a video, essentially creating a digital puppet with the creator of the deepfake as the puppeteer.

This technology has been used to create famous examples like a video of Facebook creator Mark Zuckerberg; it’s also been used to produce internet pornography on a large scale using celebrity likenesses – none of it real, but much of it convincingly close.

How does deepfake technology work, what are the legal impacts, and how much danger is the average person in?

image credit: cnet.com

E-tymology

As a writer, I find etymology eternally fascinating – and when it comes to deepfakes, it turns out that the name hides its origin. The term is derived from the Reddit user (“deepfakes”) who uploaded a series of his creations in 2017. The user’s account was later banned, but the idea had already taken root.

Machine Learning Versus Deeper Thought

Deepfakes are created with the help of AI: behind the scenes, the software uses what’s called “deep learning.” It differs from regular machine learning because it relies on neural networks built from many layers, each passing information on to the next, loosely like a brain. Regular machine learning, by contrast, usually works through structured data (and usually isn’t capable of such fluid thought).
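To make the idea of information being passed along from one stage to the next a little more concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `forward` helper, the layer sizes and the hand-picked weights in `TOY_NETWORK` are invented, and a real deepfake model learns millions of weights from data rather than having them typed in.

```python
import math


def sigmoid(x):
    """Squash any number into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-x))


def layer(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of all inputs, then squashes it."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass the data through every layer in turn; each layer's output feeds the next."""
    activations = inputs
    for weights, biases in layers:
        activations = layer(activations, weights, biases)
    return activations


# Hypothetical, hand-picked weights: 3 inputs -> 2 hidden neurons -> 1 output.
TOY_NETWORK = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),
    ([[1.2, -0.7]], [0.05]),
]

if __name__ == "__main__":
    # Three made-up input values, standing in for something like pixel brightness.
    print(forward([0.9, 0.1, 0.4], TOY_NETWORK))
```

The "deep" in deep learning refers to stacking many such layers; this toy has only two, which is enough to show the hand-off from one layer to the next.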

Coincidentally, deep learning is also what allows artificially intelligent computers to learn to spot pedestrians and avoid accidents; it’s what allows computers to compete with human bridge players… But deepfakes seem to be the darker end of the spectrum of what deep learning can be applied to.

The Danger Of Deepfakes

Deepfakes have, so far, mostly affected celebrities – simply because there are source images of celebrities available, taken from every angle and with almost every facial expression.

It’s not a far leap, though, to imagine that such technologies could be used to exploit anyone, especially considering how many photographs most people stash across their social media platforms.

Laws have been proposed in Congress to control deepfakes – one proposal would legally require a watermark on any fake or imitation – but such a law would be close to impossible to enforce in the digital world.

Deepfakes Now

image credit: ai.facebook.com/blog

Deepfakes out there right now fall into two categories. Some are obvious, because common sense says that Clinton’s face doesn’t belong on Trump’s body or the other way around. Others, meaning any deepfake that isn’t made with the idea of being funny or quirky, are harder to spot by the “simple common sense” method.

Upon close analysis, though perhaps not always with the naked eye, deepfakes can still be spotted. Things like how the subject blinks or how lines blur can sometimes give away the fact that what you’re looking at isn’t real – and of course, deepfakes tend to fall apart when they are subjected to proper forensic examination.
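As a rough illustration of the blinking clue, the sketch below counts blinks in a clip and flags clips where the subject blinks unusually rarely. It is a toy heuristic, not how any real detection tool works: it assumes some face-tracking software (not shown here) has already produced an eye-openness score for every frame, and the function names and both thresholds are illustrative guesses.

```python
def count_blinks(eye_openness, closed_below=0.2):
    """Count blinks in per-frame eye-openness scores (0 = shut, 1 = wide open)."""
    blinks = 0
    eyes_closed = False
    for score in eye_openness:
        if score < closed_below and not eyes_closed:
            blinks += 1          # eyes have just closed: a new blink starts
            eyes_closed = True
        elif score >= closed_below:
            eyes_closed = False  # eyes are open again
    return blinks


def blinks_per_minute(eye_openness, fps=30.0, closed_below=0.2):
    """Convert the blink count into a rate, given the clip's frame rate."""
    if not eye_openness:
        return 0.0
    minutes = len(eye_openness) / fps / 60.0
    return count_blinks(eye_openness, closed_below) / minutes


def looks_suspicious(eye_openness, fps=30.0, min_blinks_per_minute=5.0):
    """Flag clips whose blink rate falls below an assumed, illustrative minimum."""
    return blinks_per_minute(eye_openness, fps) < min_blinks_per_minute


if __name__ == "__main__":
    # One minute of made-up footage at 30 frames per second with ten short blinks.
    natural_clip = ([1.0] * 170 + [0.0] * 10) * 10
    # One minute of made-up footage in which the eyes never close.
    staring_clip = [1.0] * 1800
    print(looks_suspicious(natural_clip), looks_suspicious(staring_clip))
```

A flag from a check like this is only a hint that a clip deserves a closer look; a low blink rate alone doesn’t prove that a video is fake, and newer deepfakes may blink convincingly.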

Tools like Reality Defender (created by the AI Foundation) attempt to spot images or videos that are fake, which makes them especially useful for journalists. Facebook launched the Deepfake Detection Challenge to help develop further algorithms that can tell real from fake.

At the very least, all of this emphasises the importance of not taking anything – even something that looks real at first – at face value.

Victims Of Deepfakes

If you become a victim of deepfakes or altered images, what do you do?

- Don’t search for it.

Repeatedly looking for a specific image or video can push it higher up in search rankings, which is the opposite of what you want.

- Contact the hosting site.

If an image or video of your likeness is posted anywhere online, contact the hosting site and ask for it to be taken down: Most comply voluntarily. For those that don’t, seek legal advice.

- Keep privacy private.

Make sure that your social media settings are set up in such a way that your library of images isn’t visible to the public.

- Hire an expert.

Yes, Photoshopped images and deepfake videos can be spotted by experts – and if you’ve been a victim of either, the best course of action is to get in touch with an expert for an official opinion on the image.
